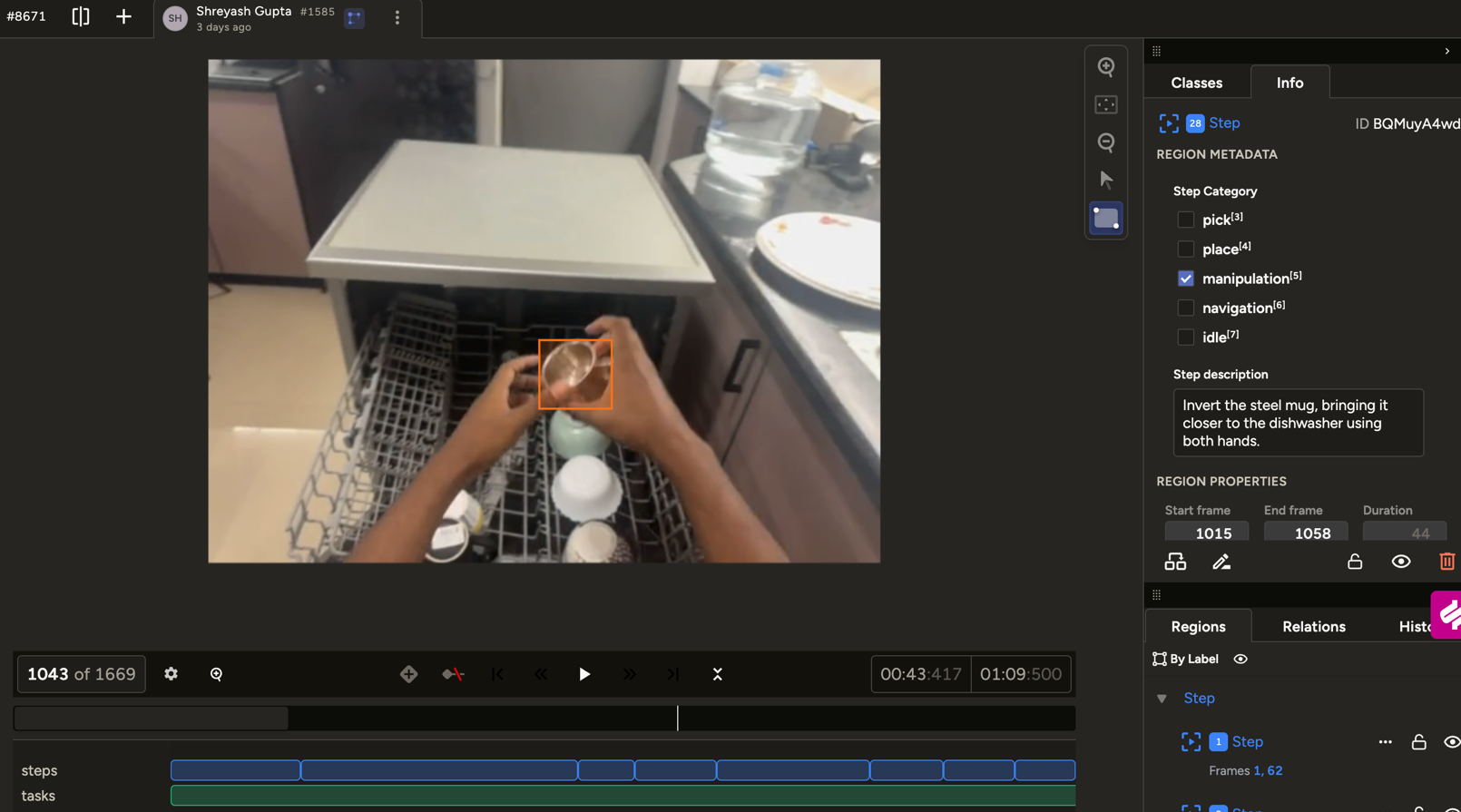

Dense action labels

Frame-accurate action segmentation over long-horizon manipulation. Scene context, object state, and contact sequences, annotated in the form the model will consume.

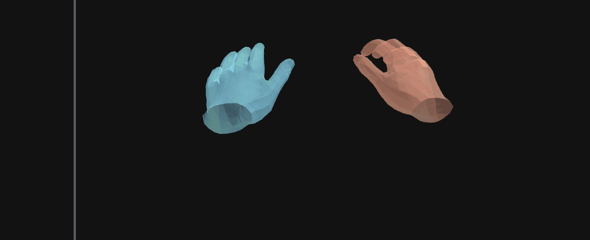

2D → 3D hand tracking

Millimeter-level 3D hand reconstruction from 2D egocentric video. Stable under self-occlusion and close-range object interaction, the regime where off-the-shelf pose estimators fail.

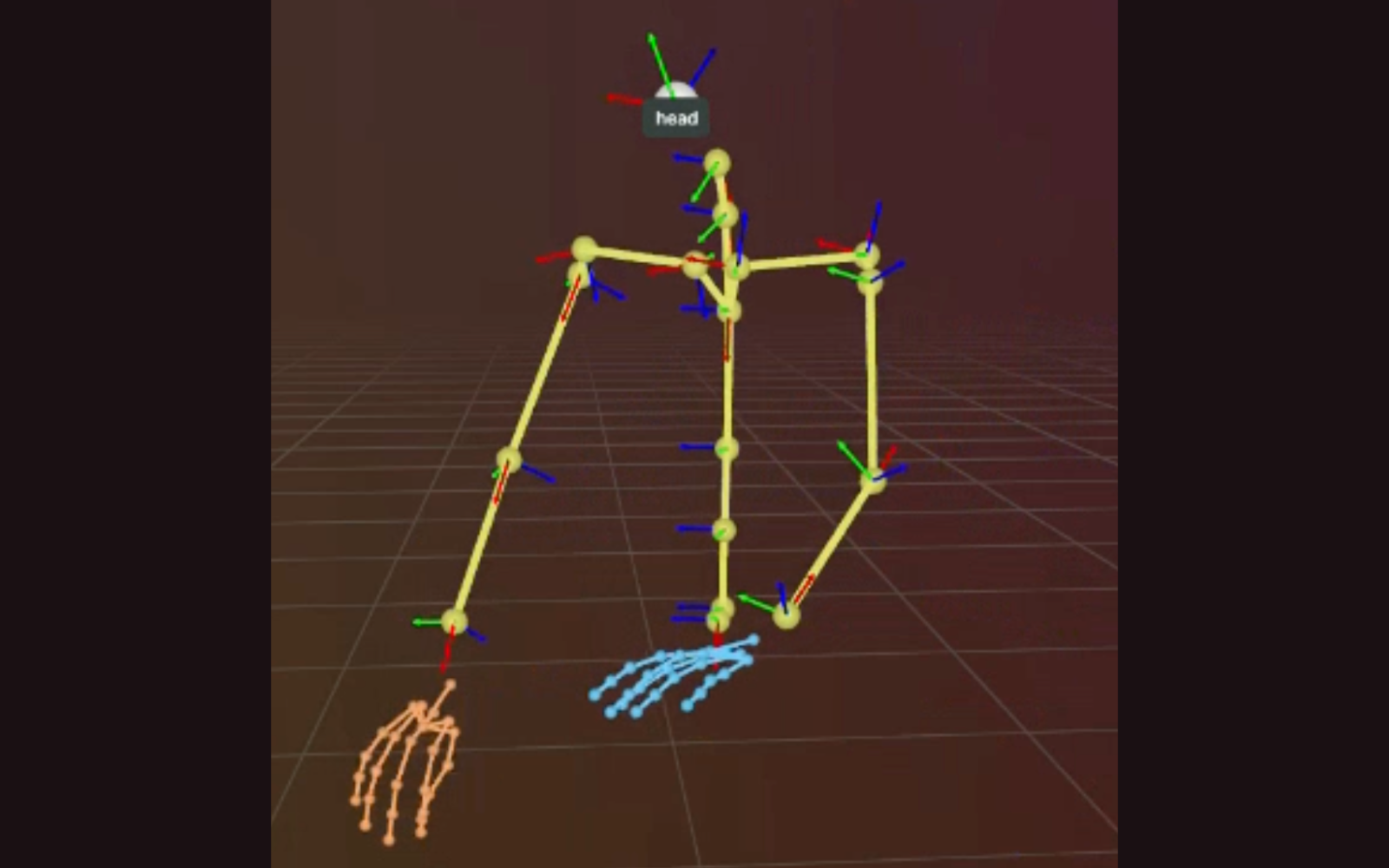

2D → 3D body pose

Full-body pose reconstruction from a single egocentric camera. Physics-aware and temporally smooth, with root trajectory: a rig a policy can actually consume.